![How to solve the problems associated with mass production in urban NOA]()

In other words, as the industry keeps changing, the strength of a carmaker's intelligent capabilities will directly determine its competitive ceiling. Smart cockpits are evolving by the day, with new configurations and functions such as giant screens, exterior voice interaction, and gesture control continuing to emerge. In intelligent driving, the competition is intensifying as well.

There are only two paths to true autonomous driving. The first is top-down: target L4/L5 driverless operation directly, then gradually scale down the configuration and capabilities to reach lower-cost solutions and cover some driverless scenarios. The second, which most OEMs use today, is bottom-up: mass-produce lower-level intelligent driving functions and iterate the technology on the large volume of data they generate, evolving toward higher levels. On this route, mass production is the most critical link.

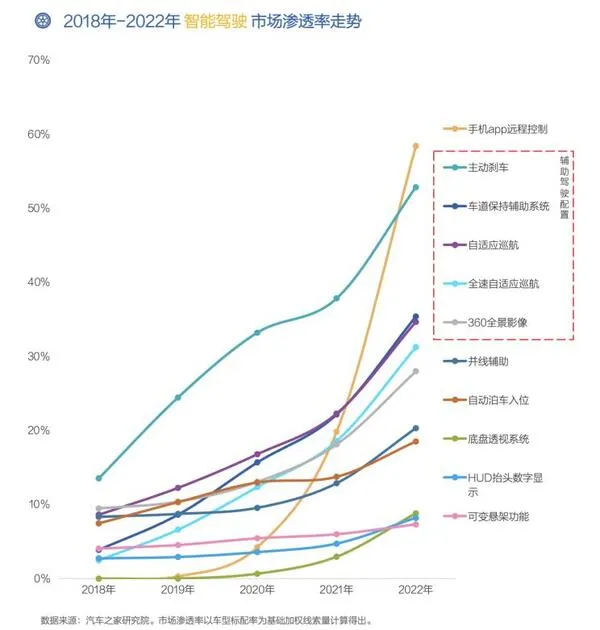

According to the "Insight into the Development Trend of Smart Cars in China" report released by Autohome Research Institute in 2022, the market penetration rate of representative L2 intelligent driving functions such as active braking, lane-keeping assist, and adaptive cruise control will grow rapidly. Even overseas brands that were previously somewhat conservative now treat some of these functions as standard equipment on new cars.

However, very few companies have achieved breakthroughs in the areas that truly test "L2+" intelligent driving ability, such as urban NOA (high-level driver assistance for city roads, which different carmakers call by different names). Most companies that have announced an urban NOA function either have not yet delivered it, so it remains a "future," or have pushed it only through internal tests, early-bird programs, and the like, which is still some distance from real mass production. The second half of the competition in autonomous driving begins with exactly this mass production problem.

★Route battle: from [heavy] map to [light] map

At present, quite a few players have entered the urban NOA field. Companies taking the bottom-up route include Haomo Zhixing, NIO, Ideal, Xiaopeng, and Jidu; companies taking the top-down route include Baidu, Qingzhou Zhihang, and Pony.ai. However, judging by launch timing and rollout scale, the urban NOA deployments of these companies are mostly concentrated in three cities: Guangzhou, Shenzhen, and Shanghai.

Take Xiaopeng as an example. In September 2022, Xiaopeng's City NGP intelligent navigation-assisted driving officially launched a pilot program in Guangzhou. The first batch of users was randomly selected from P5 owners in Guangzhou who had submitted suggestions on intelligent assisted driving. They first had to go through a "novice mode": using City NGP for more than 100 kilometers on some road sections with suitable conditions, with all road sections unlocked after more than seven days. The function was later gradually opened in Shenzhen and Shanghai.

Jihu once became popular across the Internet with its "autonomous driving" video on the eve of a Shanghai Auto Show. Although it delivered the Jihu Alpha S HI version in May 2022, it did not begin testing the urban NCA function in Shenzhen until September, and only then extended it to Shanghai.

The early urban NOA deployments were concentrated in Guangzhou, Shenzhen, and Shanghai because these three cities were in the first batch of Chinese cities with pilot licenses for urban high-precision maps. Unlike highway driving, the road conditions faced by high-level intelligent driving in cities grow exponentially more complex: traffic signal changes, tidal lane changes, pedestrian trajectory prediction, non-motor-vehicle trajectory prediction, and more. All of these scenarios place extremely high demands on a company's combined software and hardware capabilities. According to reported data, the number of perception models in Xiaopeng's urban NGP is four times that of highway NGP, and the amount of code related to prediction, planning, and control has grown to 88 times, which shows the complexity.

When a company's combined software and hardware capabilities are not yet strong enough to handle these complex scenarios, high-precision maps become a "shortcut" for launching urban NOA quickly. Such maps offer absolute and relative accuracy within 1 meter and contain road information (road type, curvature, lane line positions), environmental object information (roadside infrastructure, obstacles, traffic signs), and real-time dynamic information (traffic flow, traffic light status), combining high precision, high freshness, and rich content.

But everything has two sides. The limitations of high-precision maps and the difficulty of obtaining mapping qualifications have also become key factors restricting carmakers from mass-producing NOA quickly. At the 2023 China Automobile Forum, Li Wei, chief expert at Chongqing Changan Automobile Co., Ltd., analyzed the disadvantages of the "map-heavy" model. In his view, this model requires ever-increasing investment: although the cost of procuring highway data plus a small amount of urban data is not high in the early stage, procurement costs rise sharply as city coverage expands later. It also faces long-term problems of insufficient map freshness and coverage, which inevitably lead to poor robustness in the intelligent driving system.

![]()

"Schematic diagram of high-precision map"

As for how high the long-term cost is, Yu Chengdong, executive director of Huawei, CEO of the Terminal BG, and CEO of the Smart Car Solution BU, once gave an example: "We spent one or two years collecting high-precision maps of Shanghai, covering 9,000 kilometers, and still did not fully cover the city. And for national security reasons, a refresh is only allowed every few months, yet China's roads change daily, so an approach that relies on high-precision maps cannot be widely deployed." Because of this, the industry has gradually reached a consensus: removing the dependence on high-precision maps may be the only way to mass-produce urban NGP quickly.

Haomo Zhixing is undoubtedly among the first companies in the industry to champion a "perception-heavy" approach. As early as 2022, Haomo Zhixing officially announced a mass-production model equipped with the HPilot 3.0 system, which supports the city NOH pilot assistance function. In April this year, at the eighth Haomo AI DAY, the company announced new "carriers" for the system: WEY's new Mocha DHT-PHEV and WEY's Lanshan. According to the current plan, Haomo Zhixing's urban NOH function will be launched first in Beijing, Shanghai, Baoding, and other cities.

In addition to Haomo, companies such as Xiaopeng and Huawei that previously relied on high-precision maps have also begun shifting toward a "perception-heavy" approach. Xiaopeng's XNGP has been tested without maps and is expected to expand to 50 cities by the end of the year. Yu Chengdong announced that Huawei's urban NCA, which does not rely on high-precision maps, will be rolled out in 15 cities in the third quarter and increase to 45 cities in the fourth quarter. Even the map giant Baidu is moving toward the perception-heavy solution: its ANP3.0 system already uses "BEV Surround View 3D Perception" technology as a safety redundancy.

★Technology battle: [data capability] is the threshold

According to a forecast released by Western Securities, urban NOA will be a big cake: the number of vehicles equipped with it may reach 119,000, 676,000, and 2.436 million in 2023, 2024, and 2025 respectively. But getting a bigger slice of this cake takes more than simply choosing a route.
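
To put the three forecast figures in perspective, the short calculation below derives the implied year-over-year multiples and the compound annual growth rate. Only the three volumes come from the forecast cited above; the variable names are illustrative.

```python
# Forecast volumes for urban NOA quoted above (2023-2025).
forecast = {2023: 119_000, 2024: 676_000, 2025: 2_436_000}

years = sorted(forecast)

# Year-over-year multiple: volume in a year divided by the previous year's volume.
growth = {
    year: forecast[year] / forecast[prev]
    for prev, year in zip(years, years[1:])
}

# Compound annual growth rate across the whole forecast window.
periods = len(years) - 1
cagr = (forecast[years[-1]] / forecast[years[0]]) ** (1 / periods) - 1

for year, multiple in growth.items():
    print(f"{year}: {multiple:.2f}x the previous year")
print(f"CAGR 2023-2025: {cagr:.0%}")
```

On these numbers the forecast implies roughly a 5.7x jump in 2024 and a further 3.6x in 2025, which is why the choice of technical route matters so early.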

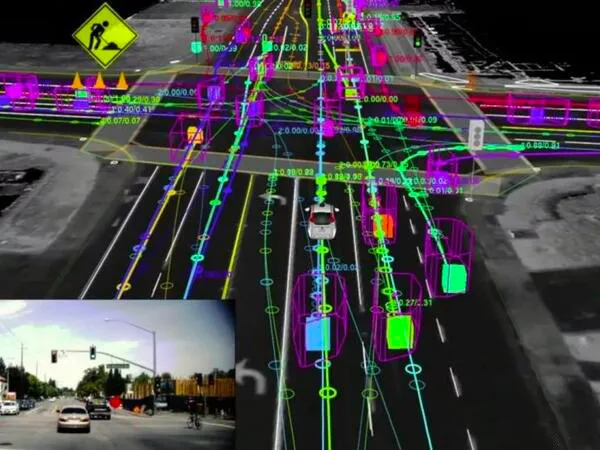

With little or no reliance on high-precision maps, the question becomes how to process the data recognized by the sensors and use it to generalize across many more cities, adapting to the "messy" road conditions and scenes in each one. Take recognizing traffic lights and controlling the car accordingly, which may seem very basic to some people, as an example. Traffic light specifications differ between cities in China: some have three lights in a row, some have five, some are horizontal, and some are vertical. Leaving the algorithm aside, the scale of the data that must be collected to cover these scenarios grows exponentially, just like the scenario complexity described earlier.

At this data scale, training models based on CNNs (convolutional neural networks) no longer seems as suitable as before. Tesla, which took the lead in proposing to drop high-precision maps and even lidar in favor of a pure vision solution, set an example for everyone by beginning to replace CNNs with large Transformer models, taking advantage of their simple structure and stackable basic units to reach a huge number of parameters and improve performance.

Compared with CNNs, Transformers benefit more as the amount of training data grows. Studies have shown that when the training data set is increased to 100 million images, the performance of Transformers begins to exceed that of CNNs, and when the number of images increases to 1 billion, the performance gap between the two becomes even larger.

Haomo Zhixing is China's first autonomous driving company to introduce a large Transformer model. Although it was not as early as Tesla, Haomo Zhixing's innovation lies in using the Transformer for pre-fusion across time and space.

For example, on an ordinary stretch of road, human eyes see a continuous visual image of a two-way, four-lane road, but what the camera recognizes is not continuous; it is a series of frame-by-frame pictures. Suppose the car drifts 5 cm to the left: a person still perceives the road normally and can straighten out. Under the original scheme, camera recognition is more complicated: the road itself may appear "crooked," and without a high-precision map to correct it, the system may become confused and unable to stitch the frames together effectively.

The eventual solution is to use the Transformer for pre-fusion in time and space: the large model's attention mechanism extracts the correlations between pixels in different images, the resulting feature vectors are fused, and a neural network then performs target prediction. This not only solves the problem that multi-angle cameras cannot be stitched into a "God's-eye view," but can even integrate lidar data to supplement vision.
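
As a rough illustration of how attention can weigh features from different cameras and frames before fusing them, the sketch below applies scaled dot-product attention to a handful of toy feature vectors. It is a minimal sketch, not Haomo Zhixing's implementation: the function names, the feature values, and the simplification of using the same vectors as keys and values (with no learned projections) are all assumptions made for the example.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def attention_fuse(query, features):
    """Fuse feature vectors with scaled dot-product attention for one query.

    Simplification (assumption): the features act as both keys and values,
    whereas a real Transformer layer would apply learned projections first.
    """
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, f) / scale for f in features])
    dim = len(features[0])
    fused = [sum(w * f[i] for w, f in zip(weights, features)) for i in range(dim)]
    return weights, fused


# Hypothetical toy features for the same road patch seen by different views.
features = [
    [1.0, 0.0, 0.0, 0.0],  # front camera, current frame
    [0.9, 0.1, 0.0, 0.0],  # front camera, previous frame
    [0.0, 0.0, 1.0, 0.0],  # left camera, current frame (unrelated content)
]
# Hypothetical query for one cell of the top-down view being assembled.
query = [1.0, 0.0, 0.0, 0.0]

weights, fused = attention_fuse(query, features)
print([round(w, 3) for w in weights])
print([round(v, 3) for v in fused])
```

The views whose features correlate with the query receive the largest weights, so the fused vector is dominated by consistent evidence across frames and cameras rather than by any single picture.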

On this point, Perxing, technical director of Haomo Zhixing, once used lane lines, the scene most likely to appear in urban driving, as an example. Unlike highway scenes, he said, lane lines on urban roads are extremely complicated: in some places they have been worn away and repainted, but the old lines have not been completely removed. In this scenario, the attention mechanism of the large Transformer model can solve the problem very well.

It is worth noting that urban NOA faces many similar scenarios. For example, if there are multiple red lights at a complex intersection, which light should the vehicle follow? To solve tens of thousands of scenarios like this, you have to recognize a lot of data, do a lot of labeling, simulate many scenarios, do a lot of training, make a lot of adjustments, and write many rules.

How can all of this "a lot" be handled? In the words of Gu Weihao, CEO of Haomo Zhixing, the industry has been working on it for 20 years: prediction, planning, decision-making, and control were each broken into small tasks, and the work was still not finished after 20 years, until GPT (Generative Pre-trained Transformer) technology began to be applied.

On April 11 this year, Haomo Zhixing officially released DriveGPT, a large generative model for autonomous driving. It takes the text sequence produced by perception fusion of the driving scene as input and outputs a text sequence describing the autonomous driving scene, tokenizing driving scenes into a "Drive Language." It then completes tasks such as the ego vehicle's decision and control output, obstacle prediction, and the output of the decision logic chain. In layperson's terms, all of the small tasks mentioned above are reduced to two large tasks: perception and cognition.
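
To make the idea of tokenizing a driving scene more concrete, the sketch below discretizes positions on a top-down grid into integer tokens, so that a trajectory becomes a sequence a language-model-style network could consume. The grid size, cell size, and function names are hypothetical choices for illustration; the article does not describe how DriveGPT's "Drive Language" is actually encoded.

```python
def tokenize_position(x, y, cell=0.5, half_extent=50.0):
    """Map a top-down position in meters to a discrete token id.

    Hypothetical scheme: a square grid centered on the ego vehicle,
    covering [-half_extent, half_extent) on each axis.
    """
    cells_per_side = int(2 * half_extent / cell)
    col = min(max(int((x + half_extent) / cell), 0), cells_per_side - 1)
    row = min(max(int((y + half_extent) / cell), 0), cells_per_side - 1)
    return row * cells_per_side + col


def detokenize(token, cell=0.5, half_extent=50.0):
    """Return the center of the grid cell that a token id refers to."""
    cells_per_side = int(2 * half_extent / cell)
    row, col = divmod(token, cells_per_side)
    x = col * cell - half_extent + cell / 2
    y = row * cell - half_extent + cell / 2
    return x, y


# Hypothetical future positions of the ego vehicle, in meters.
trajectory = [(0.0, 0.0), (0.4, 1.2), (0.9, 2.5)]
tokens = [tokenize_position(x, y) for x, y in trajectory]
print(tokens)
print([detokenize(t) for t in tokens])
```

Once scenes are expressed as token sequences like this, predicting the next token corresponds to predicting how the scene evolves, which is what allows a generative model to cover prediction and planning within one framework.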

In addition, Haomo Zhixing has begun exploring four application capabilities with ecosystem partners: intelligent driving, driving scene recognition, driving behavior verification, and escape from difficult scenes. In scene recognition, for example, DriveGPT's overall labeling cost for a single-frame image is only one tenth of the industry level. Opening this technology to the industry will greatly reduce the cost of using data and thereby advance autonomous driving technology.

Haomo can gradually apply DriveGPT to urban NOH, shortcut recommendations, smart sparring, and escape scenarios. With DriveGPT, the vehicle drives more safely, its control actions are smoother and more human-like, and it can give the driver a reasoned explanation of why it chose a particular action.

This is Haomo's answer to removing maps, and it tests data processing capability. That processing capability has to be paired with data volume: whoever mass-produces vehicles first collects more data from them, processes it, and achieves a snowballing cycle of technical iteration. This is where the threshold for urban NOA lies.

In closing:

High-precision maps are costly, difficult to collect, and poor in freshness. Vehicle-road coordination solutions, which rely on large-scale infrastructure, are even harder to implement than high-precision maps. Under these conditions, mass-producing urban NOA and achieving a positive cycle is not easy.

According to Zhang Kai, chairman of Haomo Zhixing, the company has been able to reach mass production quickly thanks to several closed loops. The user needs closed loop: continuously analyzing driving scene data to improve strategies and feeding back the experience of new functions. The R&D efficiency closed loop: integrating data-driven concepts into product development processes such as product requirement definition and perception and cognition algorithm development, improving overall development efficiency. The data accumulation closed loop: deploying diagnostic services on the vehicle side, with data scene tags covering 92% of driving scenarios. The data value closed loop: large models continuously mining data value to solve key problems. The product self-improvement closed loop: handling after-sales problems ten times faster than traditional methods, with problems located in as little as 10 minutes. The business engineering closed loop: further improving the closed-loop engineering process of product R&D, from collection and data return, labeling and training, system calibration, and simulation verification through to the final OTA release. These closed loops look complicated enough on paper and are even harder to realize. This threshold has screened out many players.

Even a company with Tesla's volume (counting smart cars only) needs to expand its data collection capacity by opening FSD to other carmakers. Haomo Zhixing, which relies on Great Wall and is gradually expanding its circle of partners, and Huawei, which provides solutions to many OEMs, also appear to have a certain scale advantage. I believe that under such challenges, fewer and fewer OEMs will keep struggling with the "soul theory." After all, in the urgent environment of the second half of the autonomous driving competition, data volume and technical capability of this kind cannot be obtained through self-development and acquisitions alone.